- Chapter 4 of the 2018 National Climate Assessment looks at the potential climate impacts on the US energy system.

- Flow of Flows — Orchestrating ELT with Prefect and dbt. More exploration of how to build data processing pipelines using open source tooling.

- Orchestrating Airbyte data connection tasks with Prefect. Official integrations for Airbyte connectors as Prefect tasks.

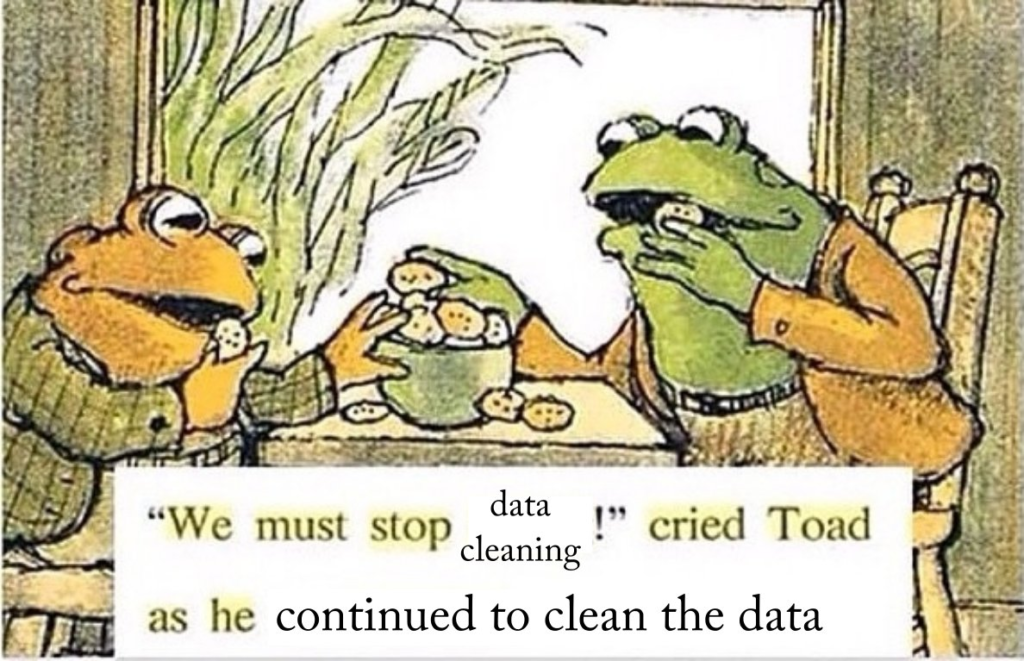

- Data cleaning IS analysis, not grunt work. A longish post exploring what we really get out of doing data cleaning, and why it’s more valuable and complex than it often gets credit for.

- Peer learnings about what it means to become an open data steward, from the 2021 ODI Open Data Summit. Videos and responses from participants on many facets of stewarding open data, especially as a business / organization.

Tag: data cleaning

Automated Data Wrangling

We work with a lot of messy public data. In theory it’s already “structured” and published in machine-readable forms like Microsoft Excel spreadsheets, poorly designed databases, and CSV files with no associated schema. In practice it ranges from almost unstructured to… almost structured. Someone working on one of our take-home questions for the data wrangler & analyst position recently noted of the FERC Form 1: “This database is not really a database – more like a bespoke digitization of a paper form that happened to be built using a database.” And I mean, yeah. Pretty much.

The more messy datasets I look at, the more I’ve started to question Hadley Wickham’s famous Tolstoy quip about the uniqueness of messy data. There’s a taxonomy of different kinds of messes that go well beyond what you can easily fix with a few nifty dataframe manipulations. It seems like we should be able to develop higher-level, more general tools for doing automated data wrangling. Given how much time highly skilled people pour into this kind of computational toil, that seems very worthwhile.

Like families, tidy datasets are all alike but every messy dataset is messy in its own way.

Hadley Wickham, paraphrasing Leo Tolstoy in Tidy Data